Simon Willison

Bio

Creator @datasetteproj, co-creator Django. PSF board. Hangs out with @natbat. He/Him. Mastodon: https://t.co/t0MrmnJW0K Bsky: https://t.co/OnWIyhX4CH

Platforms

Content history

How we contain Claude across products

A complaint I often have about sandboxing products is that they are rarely thoroughly documented, and in the absence of detailed documentation it's hard to know how much I can trust them. Anthropic just published a fantastic overview of how their various sandbox techniques work across Claude.ai, Claude Code, and Cowork: "We constrain where and how an agent can act with process sandboxes, VMs, filesystem boundaries, and egress controls. The goal is to set a hard boundary on what an agent can reach. For example, if credentials never enter the sandbox, they can't be exfiltrated, regardless of whether the cause is a user, a model finding a “creative” path, or an attacker." Claude.ai uses gVisor. Claude Code, run locally, uses Seatbelt on macOS and Bubblewrap on Linux. Claude Cowork runs a full VM (Apple's Virtualization framework on macOS, HCS on Windows). There's a lot in here, including some interesting stories of risks they missed such as t...

Running Python ASGI apps in the browser via Pyodide + a service worker

Research: Running Python ASGI apps in the browser via Pyodide + a service worker Datasette Lite is my version of Datasette that runs entirely in the browser using Pyodide in WebAssembly. When I first built it four years ago I used Web Workers and code that intercepts navigation operations and fetches the generated HTML by running the Python app. This worked, but had the disadvantage that any JavaScript in <script> tags would not be executed - breaking some Datasette functionality and a whole lot of Datasette plugins. This morning I set Claude Opus 4.8 the task (in Claude Code for web) of figuring out how to run Python ASGI apps in Pyodide using Service Workers instead, and it seems to work! Here's a basic ASGI FastCGI demo and here's a demo that runs Datasette 1.0a31. I'm still getting my head around exactly how it works, but once I've done that I plan to upgrade Datasette Lite itself. Tags: javascript, python, datasette, asgi, service-w...
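For readers unfamiliar with ASGI, here is a minimal sketch of what "running the Python app" to answer a request involves. It is not the code from the research repository: `call_asgi` is a hypothetical helper, and how a service worker would actually pass an intercepted request into Pyodide is an assumption that is not shown here. The point is only that an ASGI app is an async callable that can be driven in-process, with no network server involved.

```python
import asyncio


async def app(scope, receive, send):
    """A minimal ASGI application: answers every HTTP request with plain text."""
    assert scope["type"] == "http"
    body = f"Hello from {scope['path']}".encode("utf-8")
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"content-type", b"text/plain; charset=utf-8")],
    })
    await send({"type": "http.response.body", "body": body})


async def call_asgi(asgi_app, path):
    """Hypothetical helper: push one GET request through the app without a server."""
    scope = {
        "type": "http",
        "asgi": {"version": "3.0"},
        "http_version": "1.1",
        "method": "GET",
        "path": path,
        "raw_path": path.encode("utf-8"),
        "query_string": b"",
        "headers": [],
    }
    messages = []

    async def receive():
        # A GET request has no body, so report an empty, complete request.
        return {"type": "http.request", "body": b"", "more_body": False}

    async def send(message):
        # Collect the response messages instead of writing them to a socket.
        messages.append(message)

    await asgi_app(scope, receive, send)
    status = messages[0]["status"]
    body = b"".join(m.get("body", b"") for m in messages[1:])
    return status, body


status, body = asyncio.run(call_asgi(app, "/demo"))
print(status, body.decode("utf-8"))
```

In a browser setup along the lines described above, something would need to build the `scope` from the intercepted request and turn the collected messages back into a response; that glue is left out because the source does not describe it.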

Activity on simonw/research

simonw contributed to simonw/research

I Am Retiring from Tech to Live Offline

I've seen a lot of posts on forums from people threatening to quit their careers over AI. This is not one of those: Chad Whitacre is taking concrete steps, starting with this typewritten, scanned letter: I'm retiring from tech. Well, "retiring" is euphemistic. I'm stepping away from tech, and that includes Open Source. [...] AI was the last straw. Have you heard of that island off India where the indigenous population kills any outsiders fool-hardy enough to land? They are doing the rest of us a favor by preserving a way of life we may need again someday, or at the very least should not want to see completely extinguished. A reminder. Never forget your roots. Here in Pennsylvania we have the Amish performing a similar function. Significantly less hostile, though still set apart, they bear witness to what was normal for all of us a couple short centuries ago: horse and buggy, wood stoves and lanterns. My intent is to be AI Amish, which me...

Activity on simonw/datasette

simonw commented on an issue in datasette

Activity on simonw/datasette

simonw labeled an issue in datasette

Activity on simonw/datasette

simonw labeled an issue in datasette

Activity on simonw/datasette

simonw labeled an issue in datasette

Quoting Daniel Jalkut

My take on AI is, essentially, everybody who’s against it is too against it and everybody who’s for it is too for it. — Daniel Jalkut, via John Gruber Tags: ai

Activity on simonw/datasette

simonw commented on an issue in datasette

Re I regret having missed that "harder to justify" line from my original quote, the paragraph it's embedded in was a little bit mangled but I should have worked harder to edit that in

Activity on simonw/datasette.io

simonw contributed to simonw/datasette.io

datasette 1.0a31

Release: datasette 1.0a31 Another significant alpha release, with two new headline features. Datasette now offers users with the necessary permissions the ability to both execute write queries against their database and to save stored queries (renamed from "canned queries") both privately and for use by other members of their Datasette instance. There's more detail in SQL write queries and stored queries in Datasette 1.0a31 on the Datasette blog, which now has three posts introducing new features since the blog launched two weeks ago. Here's an animated demo from the blog post showing how the new execute query interface lets people get started with templated insert/update/delete queries from tables they have permission to edit: Tags: projects, sql, sqlite, datasette, annotated-release-notes

Released simonw/datasette

simonw released 1.0a31 at simonw/datasette

Activity on simonw/datasette

simonw closed an issue in datasette

Activity on simonw/datasette

simonw commented on an issue in datasette

simonw commented on an issue in datasette

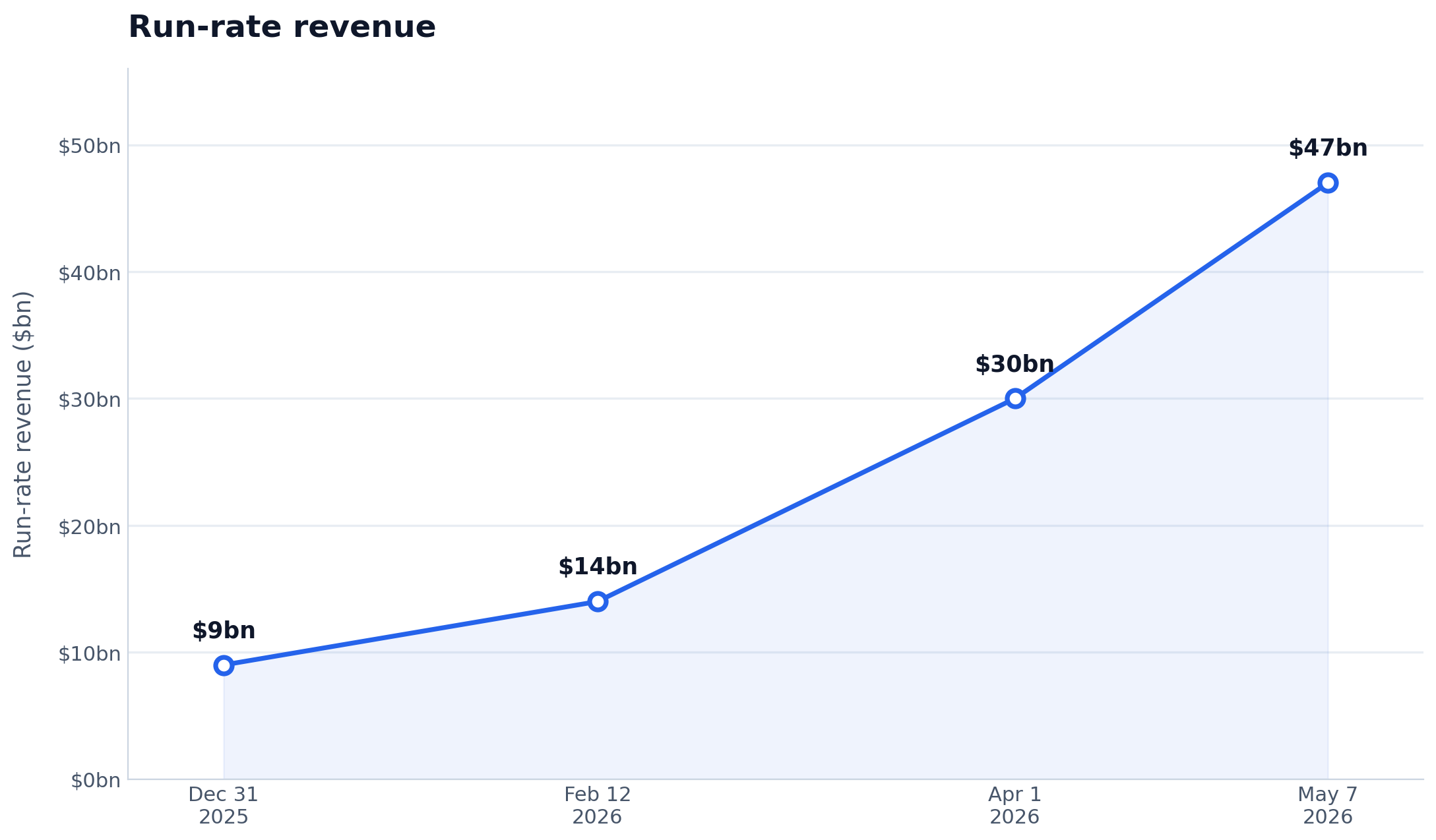

View on GitHubAnthropic's self-reported run-rate revenue growth is wild - Axios @JimVandeHei said he could not find "any company — in any industry, in any era — that has scaled organic revenue this quickly at this level as Anthropic" when they were at $30B, and now they're at $47B! https://simonwillison.net/2026/May/29/anthropic/

Anthropic's run-rate revenue hits $47 billion

The most interesting thing about Anthropic's $65B Series H announcement is this line (emphasis mine): "Since our Series G in February, adoption has continued to grow across global enterprise customers, and our run-rate revenue crossed $47 billion earlier this month."

Anthropic have made a bit of a habit of sharing their "run-rate revenue" in this kind of announcement, which is an annualized projection of their current revenue - typically calculated by taking the most recent month and multiplying by 12. Earlier this year:

Apr 6, 2026 in Anthropic expands partnership with Google and Broadcom: "Our run-rate revenue has now surpassed $30 billion—up from approximately $9 billion at the end of 2025."

Feb 12, 2026 in Anthropic raises $30 billion in Series G: "Today, our run-rate revenue is $14 billion, with this figure growing over 10x annually in each of those past three years."

I had Claude Opus 4.8 make me this chart using Matplotlib (Claude: "a data line chart is more straightfo...
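The run-rate arithmetic described above can be sketched in a few lines. This assumes the "most recent month times 12" definition given in the post; Anthropic's exact method is not stated, so the implied monthly figures are illustrative only. The dollar amounts are the ones quoted above, and "surpassed" and "crossed" are treated as if they were exact values.

```python
def run_rate(monthly_revenue):
    """Annualize one month of revenue: the 'multiply by 12' definition."""
    return monthly_revenue * 12


def implied_monthly(run_rate_revenue):
    """Invert the definition: the monthly revenue a given run-rate implies."""
    return run_rate_revenue / 12


# Run-rate figures quoted in the post, in US dollars.
reported = {
    "end of 2025": 9e9,
    "Feb 12, 2026": 14e9,
    "Apr 6, 2026": 30e9,
    "May 2026": 47e9,
}

for label, annualized in reported.items():
    monthly_billions = implied_monthly(annualized) / 1e9
    print(f"{label}: ${annualized / 1e9:.0f}B run-rate, about ${monthly_billions:.2f}B per month")
```

Under that definition, a $47 billion run-rate corresponds to a most recent month of roughly $3.9 billion.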

Notes on Claude Opus 4.8, plus pelicans riding bicycles for each of the five different thinking efforts https://simonwillison.net/2026/May/28/claude-opus-4-8/

Claude Opus 4.8: "a modest but tangible improvement"

Anthropic shipped Claude Opus 4.8 today. My favourite thing about it is this note in the release announcement: Users will find Opus 4.8 to be a modest but tangible improvement on its predecessor. There’s still more to be done: we’re working on developing and releasing models that provide many of the same capabilities as Opus at a lower cost. It's so refreshing to see an AI lab honestly describe a release as a minor incremental improvement over the previous model! Honesty seems to be a theme. Here's my other favorite note from that announcement: One of the most prominent improvements in Opus 4.8 is its honesty. We train all our models to be honest---for instance, to avoid making claims that they can't support. But a general problem with AI models is that they sometimes jump to conclusions, confidently claiming to have made progress in their work despite the evidence being thin. Early testers report that Opus 4.8 is more likely to flag uncertainties about its work and l...

Released simonw/llm-anthropic

simonw released 0.25.1 at simonw/llm-anthropic

llm-anthropic 0.25.1

Release: llm-anthropic 0.25.1 New model: Claude Opus 4.8 (claude-opus-4.8). New -o fast 1 option for fast mode, for organizations with that feature enabled on their account. Default max_tokens for each model now defaults to that model's maximum output rather than 8,192. #72 See also my notes on Opus 4.8 - I used this new release of llm-anthropic to generate the pelicans.

Activity on simonw/llm-anthropic

simonw commented on an issue in llm-anthropic

Activity on simonw/llm-anthropic

simonw labeled an issue in llm-anthropic

Activity on simonw/datasette

simonw commented on an issue in datasette

Activity on simonw/datasette

simonw commented on an issue in datasette

Activity on simonw/datasette

simonw commented on an issue in datasette

markdown-svg-renderer

Tool: markdown-svg-renderer A slightly customized Markdown rendering tool with special treatment for fenced code SVG blocks - it both renders the image and provides a tab for switching to the code view. You can paste in Markdown or give it a URL to a CORS-enabled Markdown file or Gist. Here's an example where it loads a Markdown file full of LLM pelican logs for Opus 4.8. Tags: svg, tools, markdown, cors

sqlite AGENTS.md

sqlite AGENTS.md SQLite gained an AGENTS.md file five days ago - but it's not intended for their own development, it's presumably aimed at people who are pointing agents at the SQLite codebase. It includes: SQLite does not accept pull requests without prior agreement and/or accompanying legal paperwork that places the pull request in the public domain. However, the human SQLite developers will review a concise and well-written pull request as a proof-of-concept prior to reimplementing the changes themselves. SQLite does not accept agentic code. However the project will accept agentic bug reports that include a reproducible test case. Patches or pull requests demonstrating a possible fix, for documentation purposes, are welcomed. The most recent commit to that file removed "(currently)" from "SQLite does not (currently) accept agentic code", with the commit message "Strengthen the statement about not accepting agentic code". Meanwhile the SQLite forum was being flooded with s...

Given the recent burst of activity around enterprise pricing and contracts, I think April 2026 was the month when both OpenAI and Anthropic found product-market fit https://simonwillison.net/2026/May/27/product-market-fit/

I think Anthropic and OpenAI have found product-market fit

Anthropic are strongly rumored to be about to have their first profitable quarter. Stories are circulating of companies surprised at how expensive their LLM bills are becoming from usage by their staff. I think this is because OpenAI and Anthropic have both found product-market fit.

Sections: Enterprise customers are now paying API prices; I think they've found product-market fit; And they're ramping up; The AI-failure stories around this are pretty thin; We also know the labs are spending a lot; API revenue is becoming less important; April is a new inflection point.

Enterprise customers are now paying API prices: I currently subscribe to the $100/month Max plan from Anthropic and the $100/month Pro plan from OpenAI. If you are a heavy user of coding agents these plans are a fantastic deal. I just ran the ccusage tool on my laptop to get an estimate of how much I would have spent if I were to pay for API tokens in the past 30 days and got: $1,199.79 for Anthrop...

Quoting Kyle Ferrana

PICARD: Data, shields up DATA: Brilliant! Shields can reduce damage we sustain. Not immunity. Not hubris. Just prudence. It's not precaution—it's strategy. [camera shakes] WORF: HULL BREACHES ON NINE DECKS DATA: Here's what happened: you told me to raise shields, and I didn't — Kyle Ferrana, @KyleTrainEmoji Tags: ai-misuse, coding-agents, ai, llms

The pressure

The pressure Daniel Stenberg on the unprecedented level of pressure the curl team are facing right now thanks to the deluge of (credible) AI-assisted security issues being reported. The rate of incoming security reports is 4-5 times higher than it was in 2024 and double the speed of 2025 -- meaning that on average we now get more than one report per day. The quality is way higher than ever before. The reports are typically very detailed and long. [...] For the first time in my life, my wife voiced concerns about my work hours and my imbalanced work/life situation. I work more than I’ve done before, but the flood keeps coming. [...] This is a never-before seen or experienced pressure on the curl project and its security team members. An avalanche of high priority work that trumps all other things in the project that is primarily mental because we certainly could ignore them all if we wanted, but we feel a responsibility, we have a conscience and we are proud about our work. T...

RT Kyle 🚄 PICARD: Data, shields up DATA: Brilliant! Shields can reduce damage we sustain. Not immunity. Not hubris. Just prudence. It's not precaution—it's strategy. [camera shakes] WORF: HULL BREACHES ON NINE DECKS DATA: Here's what happened: you told me to raise shields, and I didn't

Microsoft Copilot Cowork Exfiltrates Files

Microsoft Copilot Cowork Exfiltrates Files The biggest challenge in designing agentic systems continues to be preventing them from enabling attackers to exfiltrate data. In this case Microsoft Copilot Cowork (yes, that's a real product name) was allowing agents to send emails to the user's own inbox without approval... but those messages were then displayed in a way that could leak data to an attacker via rendered images: Because these messages can contain external images that trigger network requests to external websites, data can be exfiltrated when a user opens a compromised message sent by the agent. Since OneDrive can create pre-authenticated download links, a successful prompt injection could cause those links to be leaked, allowing files to be downloaded by the attacker. Via Hacker News Tags: microsoft, security, ai, prompt-injection, generative-ai, llms, exfiltration-attacks, lethal-trifecta

Quoting Paul Graham

A lot of the emails I get from founders are now written in a hard-hitting journalistic style. I know they're written by AI, because no founder ever wrote this way before. And once you realize something is written by AI, it's hard not to ignore it. I have never knowingly finished reading an email signed by a human but written by AI. It feels like being lied to, and who would stand for that? [...] It makes me think less of the author. It means they can't write well unaided (or feel they can't), and that they're trying to trick me. It's not impressive to use AI to write stuff for you; any teenager can do that. — Paul Graham Tags: writing, ai-misuse, paul-graham, generative-ai, ai, llms

Quoting Corey Quinn

I cannot believe I'm saying this, but getting the literal Pope to canonize your product's specific technical limitations as a spiritual treatise is the single greatest act of vendor lobbying I have ever seen. — Corey Quinn, on Anthropic co-founder Christopher Olah's influence on Magnifica Humanitas Tags: ai-ethics, corey-quinn, anthropic, ai

Notes on Pope Leo XIV's encyclical on AI

Dropped this morning by the Vatican: Magnifica Humanitas of His Holiness Pope Leo XIV on Safeguarding the Human Person in the Time of Artificial Intelligence. This is a very interesting document. It's some of the clearest writing I've seen on the ethics of integrating AI into modern society. Pope Leo XIV chose the name Leo in honor of Pope Leo XIII, who is known for his 1891 Rerum novarum encyclical on "Rights and Duties of Capital and Labor". This story on Vatican News further clarifies the significance of that decision: Meeting with the College of Cardinals for their first formal encounter after his election, Pope Leo XIV explained part of the reason for the choice of his papal name. "There are different reasons for this," he said, before going on to explain that he chose the name Leo "mainly because Pope Leo XIII, in his historic encyclical Rerum novarum addressed the social question in the context of the first great industrial revolution." "In our own day," he continu...

California Brown Pelican, Snowy Egret, California Sea Lion, Harbor Seal

California Brown Pelican, Snowy Egret, California Sea Lion, Harbor Seal, in San Mateo County, CA, US. We took our new folding kayak out in the harbor and saw sea lions and harbor seals chilling on the docks.

Given how much of the original "bottle of water per generated email" water estimate came from guesses at the architecture of GPT-4, it would be very much in @OpenAI's interest to publish the architecture of that now-retired, three year old model

RT Paul Graham Re I have never knowingly finished reading an email signed by a human but written by AI. It feels like being lied to, and who would stand for that?

Re Plugins can also affect the empty state of the new menu - the latest datasette-agent adds a form for kicking off a new agent conversation - live demo (if you sign in with GitHub) on https://agent.datasette.io/

datasette 1.0a30

Release: datasette 1.0a30 The big new feature in this alpha is a new customizable "Jump to..." menu, described in detail in The extensible "Jump to" menu in Datasette 1.0a30 on the Datasette blog. You can try it out by hitting / on latest.datasette.io - it looks like this: The new jump_items_sql() plugin hook allows plugins to add their own items to the set that's searched by the menu. Tags: projects, datasette, annotated-release-notes

datasette-agent 0.1a4

Release: datasette-agent 0.1a4 Taking advantage of the new makeJumpSections() JavaScript plugin hook added in Datasette 1.0a30, datasette-agent now presents this "Start a new agent chat" interface as part of the Jump to menu, any time you hit /: You can try this out by signing into agent.datasette.io using your GitHub account. Tags: datasette, datasette-agent

datasette-fixtures 0.1a0

Release: datasette-fixtures 0.1a0 One of the smaller features in Datasette 1.0a30 is this: New documented datasette.fixtures.populate_fixture_database(conn) helper for creating the fixture database tables used by Datasette's own tests, intended for plugin test suites. This new plugin takes advantage of that API. You can try it out using uvx without even installing Datasette like this: uvx --prerelease=allow \ --with datasette-fixtures datasette \ --get /fixtures/roadside_attractions.json Which outputs: { "ok": true, "next": null, "rows": [ {"pk": 1, "name": "The Mystery Spot", "address": "465 Mystery Spot Road, Santa Cruz, CA 95065", "url": "https://www.mysteryspot.com/", "latitude": 37.0167, "longitude": -122.0024}, {"pk": 2, "name": "Winchester Mystery House", "address": "525 South Winchester Boulevard, San Jose, CA 95128", "url": "https://winchestermysteryhouse.com/", "latitude": 37.3184, "longitude": -121.9511}, {"pk": 3, "name": "Bu...

Quoting Armin Ronacher

The most frustrating failure mode right now is that people submit issues that are not in their own voice. They contain an observed problem somewhere, but it has been thrown into a clanker and the clanker reworded it and made a huge mess of it. Typically, it was prompted so badly that the conclusions produced are more often than not inaccurate but always full of confidence. The result is complete guesswork on root causes, fake-minimal repros, suggested implementation strategies, analogies to adjacent but often the wrong code, and long lists of error classes that might or might not matter. [...] So at least personally, I increasingly want issue reports to be condensed to what the human actually observed: I ran this command. I expected this to happen. This happened instead. Here is the exact error or log. — Armin Ronacher, on slop issues filed against Pi Tags: ai, github-issues, llms, ai-ethics, open-source, coding-agents, generative-ai, armin-ronacher, pi, slop

Mad House — Usborne Creepy Computer Games

Tool: Mad House — Usborne Creepy Computer Games Via Hacker News I learned that UK publisher Usborne published free PDFs of their 1980s Computer Books, some of which I remember working through on my Commodore 64 as a child. These were so great! Beautifully illustrated books with fun projects made up of code you could type into your own machine. I remember playing "Mad House" typed in from the 1983 book "Creepy Computer Games", so I fed that PDF into Claude and had it build an interactive version of that game in JavaScript and HTML: Build a vanilla JS artifact that exactly recreates the game Mad House from this book, make sure it's mobile friendly and has a suitable retro aesthetic Credit the book title and link to https://usborne.com/us/books/computer-and-coding-books Tags: computer-history, games, tools

On the <dl>

I learned a few new-to-me things about the <dl> element from this article by Ben Myers:

- A <dt> can be followed by multiple <dd>.
- You can optionally group the <dt> and <dd> elements in a <div> for styling - but only a <div>.
- You can label them using ARIA.
- They've been called "description lists", not "definition lists", since an HTML5 draft in 2008.

So this is valid:

<h2 id="credits">Credits</h2>
<dl aria-labelledby="credits">
  <div>
    <dt>Author</dt>
    <dd>Jeffrey Zeldman</dd>
    <dd>Ethan Marcotte</dd>
  </div>
</dl>

Here's a useful note from Adrian Roselli on screen reader support for description lists. Via Hacker News Tags: css, html, screen-readers, web-standards

The memory shortage is causing a repricing of consumer electronics

The memory shortage is causing a repricing of consumer electronics David Oks provides the clearest explanation I've seen yet of why consumer products that use memory are likely to get significantly more expensive over the next few years. The short version is that memory manufacturers - of which there are just three remaining large companies - have a fixed capacity in terms of how many wafers they can process at any one time. This fixed wafer capacity is then split between DDR - used in desktops and servers, LPDDR - used in mobile phones and low-energy devices, and HBM - used with GPUs. Until recently, HBM got just 2% of that wafer allocation. The enormous growth in AI data centers has pushed that up to an expected 20% by the end of 2026, and "a single gigabyte of HBM consumes more than three times the wafer capacity that a gigabyte of DDR or LPDDR does". Memory companies have learned from the extinction of their rivals that you should always under-provision rather than over-pr...
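The wafer allocation argument above can be made concrete with some rough arithmetic. This is a sketch under stated assumptions, not figures from the article: total wafer capacity is held fixed, DDR and LPDDR are lumped together as "conventional" memory, and a gigabyte of HBM is taken to cost exactly three times the wafer capacity of a conventional gigabyte (the source says "more than three times", so real HBM output would be lower still).

```python
# Assumption: exactly 3x; the article says "more than three times".
HBM_WAFER_COST = 3.0


def conventional_share(hbm_share):
    """Fraction of the fixed wafer capacity left for DDR and LPDDR."""
    return 1.0 - hbm_share


def relative_gigabytes(hbm_share, hbm_cost=HBM_WAFER_COST):
    """Total gigabytes produced, relative to spending every wafer on DDR/LPDDR."""
    return conventional_share(hbm_share) + hbm_share / hbm_cost


hbm_before = 0.02  # HBM share of wafers "until recently"
hbm_after = 0.20   # expected HBM share by the end of 2026

# How much wafer capacity conventional memory loses when HBM grows.
drop = 1 - conventional_share(hbm_after) / conventional_share(hbm_before)
print(f"Wafers left for DDR/LPDDR fall by {drop:.1%}")

# Total gigabytes across all memory types also falls, because HBM is wafer-hungry.
print(f"Relative gigabyte output before: {relative_gigabytes(hbm_before):.3f}")
print(f"Relative gigabyte output after:  {relative_gigabytes(hbm_after):.3f}")
```

Under these assumptions the wafers available for desktop, server and phone memory shrink by roughly 18%, which is the supply squeeze the article connects to rising consumer prices.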

FTC to Require Cox Media Group, Two Other Firms to Pay Nearly $1 Million to Settle Charges They Deceived Customers About “Active Listening” AI-Powered Marketing Service

FTC to Require Cox Media Group, Two Other Firms to Pay Nearly $1 Million to Settle Charges They Deceived Customers About “Active Listening” AI-Powered Marketing Service Back in 2024 Cox Media Group were caught trying to sell advertisers packages based on "active listening", with this deck which claimed: Smart devices capture real-time intent data by listening to our conversations Advertisers can pair this voice-data with behavioral data to target in-market consumers I wrote about this in September 2024. My theory: I think active listening is the term that the team came up with for “something that sounds fancy but really just means the way ad targeting platforms work already”. Then they got over-excited about the new metaphor and added that first couple of slides that talk about “voice data”, without really understanding how the tech works or what kind of a shitstorm that could kick off when people who DID understand technology started paying attention to their marketing. ...

Datasette Agent

We just announced the first release of Datasette Agent, a new extensible AI assistant for Datasette. I've been working on my LLM Python library for just over three years now, and Datasette Agent represents the moment that LLM and Datasette finally come together. I'm really excited about it! Datasette Agent provides a conversational interface for asking questions of the data you have stored in Datasette. Add the datasette-agent-charts plugin and it can generate charts of your data as well. The demo The announcement post (on the new Datasette project blog) includes this demo video: I recorded the video against the new agent.datasette.io live demo instance, which runs Datasette Agent against example databases including the classic global-power-plants by WRI, and a copy of the Datasette backup of my blog. The live demo runs on Gemini 3.1 Flash-Lite - it's cheap, fast and has no trouble writing SQLite queries. A question I asked in the demo was: when did Simon most...

datasette-agent-sprites 0.1a0

Release: datasette-agent-sprites 0.1a0 A Datasette Agent plugin for running commands in a Fly Sprites sandbox. Tags: sandboxing, datasette, fly, datasette-agent

datasette-agent-charts 0.1a2

Release: datasette-agent-charts 0.1a2 "View SQL query" buttons below rendered charts. Tags: datasette, datasette-agent

datasette-agent 0.1a3

Release: datasette-agent 0.1a3 "View SQL query" buttons for both visible tables and collapsed SQL result tool calls. Don't display empty reasoning chunks Improved handling of truncated responses - table still displays to the user even if the SQL results were truncated when showing the agent. See Datasette Agent, an extensible AI assistant for Datasette. Tags: datasette, datasette-agent

Committed to simonw/datasette.io

simonw commented on commit simonw/datasette.io@75d18c2ab9

Quoting SpaceX S-1

We have the ability to use compute resources to support our proprietary AI applications (such as Grok 5, which is currently being trained at COLOSSUS II), while also providing access to select compute capacity to third-party customers. For example, in May 2026, we entered into Cloud Services Agreements with Anthropic PBC (“Anthropic”), an AI research and development public benefit corporation, with respect to access to compute capacity across COLOSSUS and COLOSSUS II. Pursuant to these agreements, the customer has agreed to pay us $1.25 billion per month through May 2029, with capacity ramping in May and June 2026 at a reduced fee. The agreements may be terminated by either party upon 90 days’ notice. — SpaceX S-1, highlights mine Tags: anthropic, grok, generative-ai, ai, llms

Activity on simonw/datasette

simonw commented on an issue in datasette

Activity on simonw/datasette

simonw closed an issue in datasette
